Retrieval Practice: Why Testing Yourself Changes What You Know

Most people who have heard of retrieval practice understand it as a form of self-assessment. You study something, then you quiz yourself to check whether you learned it. The quiz is the measurement. The studying is the learning.

That framing is wrong. This matters, because it shapes how people use the strategy, how they evaluate whether it's working, and ultimately whether they stick with it when it stops feeling productive.

Retrieval is not a readout. The act of pulling information out of memory, reconstructing it rather than just recognizing it, changes the memory itself. It strengthens the pathways that lead to it, reorganizes how it connects to related knowledge, and makes future access faster and more reliable. Testing doesn't just measure learning. Testing is learning. And for long-term retention, it is among the most powerful forms of learning we have evidence for.

If that sounds familiar, you may already be nodding. You've heard this before. You may even practice it. But there are three things the standard conversation about retrieval practice leaves out, and they're the difference between using the strategy superficially and using it well.

It Didn't Just Beat Rereading. It Beat One of the Best Active Strategies.

The study most people cite when talking about retrieval practice compared students who reread a passage multiple times with students who practiced recalling it. The recall group retained far more a week later. That finding appeared in the last post, and it's worth knowing, but it sets up a framing problem: it makes retrieval practice sound like the antidote to passivity. Stop rereading. Start testing yourself. Be active.

The problem with that framing is that it implies any active strategy should work. Concept mapping, mind mapping, summarizing in your own words: these are all active. They all require effort. They should all produce better results than passively rereading.

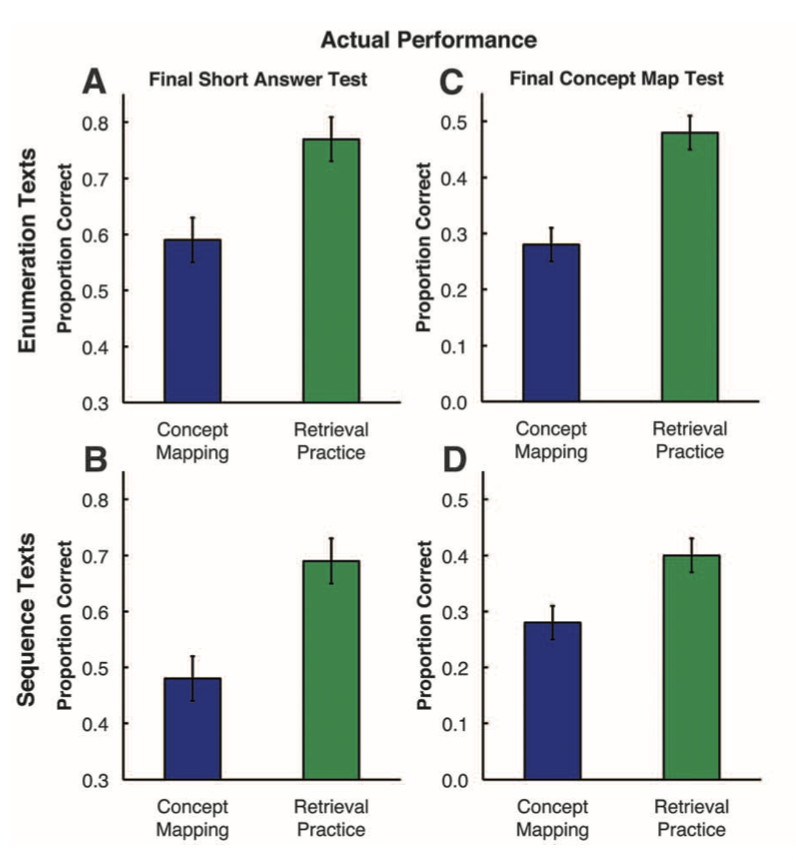

In 2011, Karpicke and Blunt published a study in Science that tested that assumption directly. Students either practiced retrieval (studying a text, then recalling everything they could from memory) or practiced elaborative concept mapping (studying the same text, then constructing a diagram that organized the key concepts and their relationships while viewing the source material). Concept mapping is not a passive strategy. It requires learners to identify main ideas, generate connections, and organize knowledge into a coherent structure. It engages exactly the kinds of deep processing that elaboration research says should improve learning.

One week later, students who practiced retrieval scored roughly fifty percent higher than students who practiced concept mapping on both factual and inference questions. The researchers then followed up with a second experiment, using 120 students and a within-subject design: each student used concept mapping for one science text and retrieval practice for a separate text. This is a strong design, because it helps minimize both differences between students in their preexisting preference or aptitude for one method and the effects of the particular material being learned. The researchers also used two different final test formats: half of the students took a short-answer test, and half were asked to create concept maps of the two texts they had read. This is another methodological strength, because it tests whether students in the first experiment performed better with retrieval practice mainly because that method resembled the format of the final test.

In this experiment, 84% of students performed better after retrieval practice. Yet when asked to predict which strategy would work better, 75% of students favored concept mapping. The most striking detail: even when the final test required students to build concept maps from memory, a format that should have given every advantage to the group that practiced concept mapping, retrieval practice still produced better results.

This is not a story about passive versus active. It's a story about what kind of cognitive work produces durable memory. Concept mapping enriches how you represent information during study. Retrieval practice forces you to reconstruct that information from scratch, using only what your memory can produce. The reconstruction is the mechanism, and it operates through a different pathway than elaboration does.

The Mechanism: What Retrieval Actually Does to Memory

When you try to recall something, your brain doesn't simply replay a stored recording. It searches through long-term memory, follows cue-based pathways, and reassembles the target information from whatever fragments it can access.

Retrieval practice strengthens memory through two distinguishable pathways. The first is direct: the act of retrieval itself modifies the memory trace. Each successful retrieval strengthens the routes that led to it, making those pathways more reliable on the next attempt. The second is subtler, and still partly theoretical. When you have dozens of related concepts stored in memory, successful recall depends on how well your retrieval cues distinguish the target from its neighbors. Karpicke and Blunt, drawing on Nairne's work on cue discriminability, proposed that retrieval practice may sharpen this precision, not by adding new information to the memory, but by narrowing the field of competitors that a given cue activates. Rereading does not appear to produce the same effect.

Brain imaging research points to something more specific. In one study, Wing, Marsh, and Cabeza (2013) used fMRI, a technology that shows which brain regions become active during a task, to watch participants learn word pairs in two different ways: by restudying them, and by testing themselves on them. A day later, those same participants took a final memory test. When the researchers went back to the brain scans, the word pairs that had been successfully remembered after testing showed activity in a specific set of regions during the original practice: the hippocampus (the brain's main memory-forming structure), parts of the temporal lobe (which processes meaning), and a part of the prefrontal cortex (which helps connect new information to what you already know).

The surprising part is that these aren't "retrieval regions." They're the same regions the brain uses when it is first learning something. In plainer terms: when you test yourself, your brain doesn't just pull a stored answer out of a filing cabinet. It reruns the same machinery it used to learn the material in the first place. Each successful retrieval is, in effect, a second learning event.

A second study by Eriksson and colleagues (2011) added a complementary finding. Their participants learned Swahili–Swedish word pairs through repeated rounds of study and self-testing the day before being scanned. Some pairs ended up being recalled successfully many times during practice; others only once or twice. The next day, in the scanner, the researchers tracked how brain activity for each specific word pair varied depending on how many times that pair had been successfully retrieved beforehand.

The pattern was clean. The more times a pair had been successfully retrieved, the more activity appeared in a region called the anterior cingulate cortex (ACC) during the test, and the less activity appeared in regions the brain typically uses for effortful problem-solving and self-monitoring. That's the opposite of what you'd expect if the brain were simply working harder. The authors' interpretation is that repeated retrieval triggers a process called consolidation: the slow biological work of moving fragile new memories into long-term storage. The ACC is one of the regions that drives that process.

The most striking detail came five months later. The same participants returned for another memory test, and the size of each person's ACC response during the original scan predicted how much they still remembered nearly half a year on (a correlation of 0.85, which is remarkably strong for brain research). Few findings in the learning literature link a single brain measurement to retention at that timescale.

Together, these two studies tell a coherent story. Testing yourself doesn't just exercise retrieval. It re-engages the brain's learning machinery (Wing) and pushes it into the consolidation work that turns short-term memories into lasting ones (Eriksson). Retrieval doesn't just measure memory. It restructures it.

So far, everything described is the direct benefit of retrieval: what the act of recall does to the memory trace itself. There is also a second, indirect benefit: retrieval reveals what you don't know. A failed retrieval attempt is diagnostic. It identifies gaps, errors, and material that needs additional work. This function is especially valuable when paired with feedback. A systematic review of nineteen studies in health professions education by Green and colleagues (2018) highlighted that feedback enhanced the benefits of testing and helped prevent the consolidation of incorrect responses. But the direct benefit operates even without feedback. In the original Roediger and Karpicke (2006) experiments, students never saw the passage again after attempting recall. Retrieval still produced substantially better retention than full re-exposure to the material. Feedback amplifies retrieval practice. It does not create the effect.

This Works Outside the Lab

A question worth asking about any learning strategy: does it hold up when the material is real, the stakes are real, and the time interval is months rather than days?

For retrieval practice, the answer is unusually clear. The Green and colleagues review mentioned earlier also took on this question directly. Across nineteen controlled studies in medical, nursing, and allied health education, 21 of 23 retention outcomes favored retrieval practice over matched study conditions. The effect held across short-answer questions, multiple-choice formats, simulation-based assessments, and standardized patient encounters, with retention intervals ranging from one week to six months. The overall effect size was large.

One study, by Larsen and colleagues (2009), illustrates the pattern well. Pediatric and emergency medicine residents attended an interactive teaching session on two neurological emergencies. Afterward, they were randomly assigned: one group took repeated short-answer tests with feedback at two-week intervals, while the other group studied matched review sheets on the same schedule. Six months later, the tested group scored 39 percent on the final assessment compared to 26 percent for the study group, an average advantage of 13 percentage points, despite the study group receiving focused, spaced review sessions that far exceeded what most residents would do on their own. The effect size was large (d = 0.91).
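For readers unfamiliar with effect sizes, the arithmetic behind a figure like d = 0.91 can be sketched as follows. This assumes the reported value is a standard Cohen's d (the difference in group means divided by a pooled standard deviation) and uses the rounded group means quoted above. The pooled standard deviation is not given in this post, so the code back-calculates an implied value rather than quoting one from the paper. The function names are illustrative.

```python
def cohens_d(mean_a: float, mean_b: float, pooled_sd: float) -> float:
    """Standardized mean difference: (mean_a - mean_b) / pooled_sd."""
    return (mean_a - mean_b) / pooled_sd


def implied_pooled_sd(mean_a: float, mean_b: float, d: float) -> float:
    """Cohen's d rearranged: the pooled SD consistent with the means and d."""
    return (mean_a - mean_b) / d


tested_mean = 39.0  # percent correct, tested group (rounded, from the text)
study_mean = 26.0   # percent correct, study group (rounded, from the text)
reported_d = 0.91   # effect size quoted in the text

# Assumption: d was computed from these two means with a pooled SD.
sd = implied_pooled_sd(tested_mean, study_mean, reported_d)
print(f"Implied pooled SD: {sd:.1f} percentage points")
print(f"Round-trip d: {cohens_d(tested_mean, study_mean, sd):.2f}")
```

Read this way, the 13-point gap is large relative to how much residents' scores varied: a little over nine-tenths of a standard deviation.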

The review also found something important about transfer: all seven transfer outcomes across three studies favored retrieval practice, including performance on standardized patient assessments and clinical reasoning tasks. The evidence for transfer is thinner than the evidence for retention, and most of it represents near transfer rather than the kind of far transfer in which learners recognize deep structural similarities across different domains. But the direction is consistent and encouraging. Retrieval practice doesn't just help you remember what you studied. It helps you use it.

What You're Probably Getting Wrong

If you've heard of active recall and you're already doing some version of it, here's the question worth asking: what did you do before you started retrieving?

Tools like Anki and question banks are genuinely effective forms of retrieval practice. When you see a prompt and produce the answer from memory before checking, that's production-based retrieval, the kind the research shows is most beneficial. The tool isn't the problem.

The problem is what often gets skipped before the retrieval begins. Many students encounter new material and move to flashcards almost immediately, treating the cards as both the learning and the review. But a flashcard built on a shallow first pass can only strengthen a shallow representation. You retrieve the fact correctly, the algorithm moves on and the card is "mature," but the knowledge behind it is isolated, disconnected from the mechanisms and relationships that would make it usable under pressure.

Retrieval practice strengthens whatever representation exists in memory, including flawed ones. If a concept was misunderstood or only partially encoded, retrieval may consolidate that incomplete version rather than correct it. Feedback helps, but it cannot fully compensate for absent structure. This is why encoding well matters before retrieval enters the picture.

This is not an argument against flashcards or question banks. These tools are among the most practical and evidence-supported ways to implement retrieval practice. But they work best as the second step, not the first. The first step is building a representation worth strengthening: engaging with the material deeply enough to understand why something works, how it connects to what you already know, and where it sits within a larger framework. Then retrieve. The encoding determines the ceiling. Retrieval determines how long you keep what you built.

The Ceiling: What Retrieval Practice Cannot Do

Here's something the standard conversation almost never mentions: retrieval practice can make things worse.

If your initial understanding of a concept is shallow, confused, or inaccurate, retrieval practice can consolidate that poor representation. You recall the wrong version, the wrong version gets strengthened, and now the error is more firmly embedded than it was before. Feedback helps, since it can catch and correct errors before they become entrenched, but it doesn't fully compensate for the absence of good initial encoding.

This is why retrieval practice is primarily a durability strategy. It strengthens and partially re-elaborates what you've built, but it's a poor substitute for encoding that didn't happen in the first place. A concept that was richly connected to prior knowledge, clearly differentiated from related ideas, and anchored by meaningful structure benefits enormously from repeated retrieval. A concept that was memorized as an isolated fact, without understanding why it works or how it connects to anything else, receives a smaller boost because there's less structure to strengthen.

The practical implication is straightforward: don't skip the understanding step. Before you start retrieving, make sure you've done the work of actually building a coherent representation. Read the material carefully. Ask yourself why it works. Connect it to things you already know. Then close the book and retrieve. The quality of what you encoded determines the ceiling on what retrieval practice can accomplish.

What This Looked Like for Me

My first three months of medical school were spent tinkering. I tried different reading schedules, rereading routines, and summarization workflows, restructuring how I moved through the material every few weeks. None of it produced results I could distinguish from what I'd get by just showing up and paying attention. On my first four in-class exams, I scored at or only a few percentage points above the class average.

During that same period, I was experimenting with Anki, an open-source flashcard application built around spaced repetition. Anki is popular among medical students because publicly available decks exist that cover the full scope of material tested on the USMLE Step 1 and Step 2 exams. The tool automates the scheduling side of retrieval practice by presenting cards at expanding intervals based on how well you recall them. The real shift for me wasn't the software, though. It was what the software made visible.
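The idea of expanding intervals can be sketched in a few lines. This is a deliberately simplified toy, not Anki's actual scheduling algorithm: the one-day starting interval, the multiplier of 2.5, and the reset-to-one-day rule after a failed recall are all assumptions chosen for illustration, and the names `Card` and `review` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Card:
    prompt: str
    interval_days: float = 1.0  # assumed starting gap before the next review


def review(card: Card, recalled: bool, multiplier: float = 2.5) -> Card:
    """Return a copy of the card with its next interval updated.

    Toy rule (an assumption, not Anki's real algorithm):
    - successful recall: the gap grows by `multiplier`
    - failed recall: the gap resets to one day
    """
    next_interval = card.interval_days * multiplier if recalled else 1.0
    return Card(card.prompt, next_interval)


card = Card("Hypothetical prompt")
history = []
for recalled in [True, True, False, True]:
    card = review(card, recalled)
    history.append(card.interval_days)

print(history)  # [2.5, 6.25, 1.0, 2.5]
```

Even in this toy version, the key behavior is visible: material you recall reliably is shown less and less often, while anything you miss comes back quickly.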

When I switched from rereading to retrieval-based study, the feedback was immediate and uncomfortable. Cards I'd just studied came back blank. Topics I thought I understood fell apart the moment I had to produce the answer rather than recognize it. That discomfort was diagnostic in exactly the way the research predicts: it exposed the gap between what felt learned and what actually was.

By the fourth in-class exam, the pattern was clear enough that I committed fully. Passive strategies, regardless of how I structured them, were not producing durable retention. I built a schedule around three principles. First, I chose the highest-quality third-party resources I could find for my initial pass through the material: sources that presented the content accurately, with clear logical framing and well-organized structure, because the quality of that first encounter would determine what every subsequent retrieval attempt had to work with. Second, I used flashcards and practice questions not only as self-assessment but as a primary learning tool, treating them as retrieval events, not just evaluation methods. Third, I maintained a deliberate effort toward deep encoding, using elaborative strategies as I encountered new material rather than deferring understanding to a later review pass.

The schedule itself was straightforward: for each exam block, I would work through the relevant content and complete the associated Anki cards with seven to ten days remaining before the exam, then use those final days to review class-specific lectures. Over two years, I matured more than 30,000 flashcards and completed just under 14,000 practice questions before sitting for Step 1. I did about 5,000 more flashcards and another 7,000 practice questions in preparation for Step 2. After three years, I was ranked third in my medical school class of approximately 200, scored at the 99th percentile on my board exams (USMLE and COMLEX), and had the highest score in my class on four of our six third-year end-of-rotation shelf exams, earning the Dean's Award for Academic Achievement. Although the specific study tactics I've used during residency have been dramatically different, the underlying principles remain unchanged, and I've maintained similar success on the annual orthopedic in-training exam (OITE).

The numbers matter less than what produced them. I know many students who completed similar volumes of flashcards and even practice questions in medical school. What I think made the difference was not the quantity of retrieval but how it was embedded in a broader strategy. The flashcards worked because I had encoded the material with enough depth to give retrieval something meaningful to strengthen. The practice questions worked because I used them as learning events, not just benchmarks. And the schedule worked because it created the conditions for spacing and repeated retrieval across weeks and months rather than days.

None of that was obvious at the start. The first three months sometimes felt like wasted time. In retrospect, they were the period where I learned, by failing to find a shortcut, that the strategies most students default to are the ones with the weakest evidence behind them.

The Core Lesson

Retrieval practice is not self-assessment. It is a learning event. The act of reconstructing knowledge from memory changes what you remember and how reliably you can access it, through mechanisms that are fundamentally different from those involved in restudying, rereading, or even other active strategies like concept mapping.

But retrieval alone is not enough. What you retrieve matters only as much as what you encoded in the first place. Flashcards and question banks are powerful retrieval tools, but they strengthen whatever representation you built. If that representation was shallow or disconnected, retrieval has little structure to strengthen. It will reinforce what's there, but it won't manufacture the connections that were never built.

The practical architecture is simple: study something carefully enough to understand it. Build the connections, ask why it works, situate it within what you already know. Then retrieve, using whatever tools fit the material and the volume. Expect retrieval to feel incomplete and uncertain at first; that's the reconstruction process at work, not a sign you're doing it wrong. Check your recall against the source. Note the gaps. Come back and retrieve again, ideally after some time has passed. And recognize that the strategies that feel most productive during study are almost always the ones that produce the least retention over time.
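The check-and-note-gaps step of that loop can be made concrete with a small sketch. It assumes you have reduced a source to a short list of key points and written down what you could recall from memory. The function name `check_recall` and the example key points are hypothetical, and exact string matching is only a stand-in for the judgment you would actually apply when comparing your recall to the source.

```python
def _normalize(text: str) -> str:
    """Lowercase and trim so trivial differences don't count as misses."""
    return text.strip().lower()


def check_recall(key_points: list[str], recalled: list[str]) -> dict[str, list[str]]:
    """Compare free recall against the source's key points.

    Returns the points that were retrieved, the points that were missed
    (gaps to re-study and retrieve again later), and recalled items that
    do not appear in the source (possible errors to verify).
    """
    source = {_normalize(p): p for p in key_points}
    attempt = {_normalize(r): r for r in recalled}
    return {
        "retrieved": [p for k, p in source.items() if k in attempt],
        "gaps": [p for k, p in source.items() if k not in attempt],
        "unverified": [r for k, r in attempt.items() if k not in source],
    }


# Hypothetical key points and a hypothetical recall attempt.
key_points = [
    "retrieval modifies the memory trace",
    "feedback amplifies the effect",
    "encoding sets the ceiling",
]
recalled = [
    "Retrieval modifies the memory trace",
    "encoding sets the ceiling",
    "rereading is best",
]

result = check_recall(key_points, recalled)
print(result["gaps"])        # ['feedback amplifies the effect']
print(result["unverified"])  # ['rereading is best']
```

The "gaps" list is what you return to after some time has passed; the "unverified" list is where feedback does its work, catching a wrong version before repeated retrieval strengthens it.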

The next post covers when to come back: how the timing of your retrieval attempts determines whether the knowledge sticks for days or for months.

AceMedEd

This post is part of a series on the science of learning. Each post covers one evidence-based principle and how to apply it to your own studying.

References

Dobson, J. L., Perez, J., & Linderholm, T. (2016). Distributed retrieval practice promotes superior recall of anatomy information. Anatomical Sciences Education, 10(4), 339–347.

Eriksson, J., Kalpouzos, G., & Nyberg, L. (2011). Rewiring the brain with repeated retrieval: A parametric fMRI study of the testing effect. Neuroscience Letters, 505(1), 36–40.

Green, M. L., Moeller, J. J., & Spak, J. M. (2018). Test-enhanced learning in health professions education: A systematic review: BEME Guide No. 48. Medical Teacher, 40(4), 337–350.

Karpicke, J. D., & Blunt, J. R. (2011). Retrieval practice produces more learning than elaborative studying with concept mapping. Science, 331(6018), 772–774.

Larsen, D. P., Butler, A. C., & Roediger, H. L. (2009). Repeated testing improves long-term retention relative to repeated study: A randomised controlled trial. Medical Education, 43(12), 1174–1181.

Nairne, J. S. (2002). The myth of the encoding–retrieval match. Memory, 10(5/6), 389–395.

Roediger, H. L., & Karpicke, J. D. (2006). Test-enhanced learning: Taking memory tests improves long-term retention. Psychological Science, 17(3), 249–255.

Van Hoof, T. J., Madan, C. R., & Sumeracki, M. A. (2021). Science of learning strategy series: Article 2, retrieval practice. Journal of Continuing Education in the Health Professions, 41(2), 119–123.

Wing, E. A., Marsh, E. J., & Cabeza, R. (2013). Neural correlates of retrieval-based memory enhancement: An fMRI study of the testing effect. Neuropsychologia, 51(12), 2360–2370.